
Move data from Database to Kafka and then to Amazon S3 Storage


07-27-2018 10:13 AM

Contributed by @pkona

This pattern uses three pipelines. The first extracts data from an Oracle database and publishes it to a Kafka topic. The second consumes from that Kafka topic and calls the third pipeline, which writes the data to Amazon S3 storage.

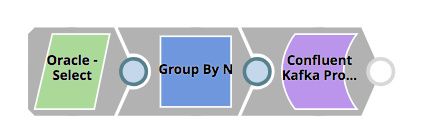

Publish to Kafka

Source: Oracle

Target: Kafka

Snaps used: Oracle Select, Group By N, Confluent Kafka Producer
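For readers who want to see the shape of this flow outside the Designer, here is a minimal Python sketch of the batching idea behind the select, group, and publish steps. It is not the Snap implementation: the `group_by_n` and `publish` names, the `"group"` field, and the `send` callback (a stand-in for a Kafka producer) are all assumptions made for illustration.

```python
import json
from itertools import islice


def group_by_n(rows, n):
    """Yield lists of up to n rows (hypothetical stand-in for a grouping step)."""
    iterator = iter(rows)
    while True:
        batch = list(islice(iterator, n))
        if not batch:
            return
        yield batch


def publish(rows, n, send):
    """Serialize each batch as one JSON message and hand it to send().

    send is an assumed callback standing in for a Kafka producer;
    the "group" field name is also an assumption.
    """
    published = 0
    for batch in group_by_n(rows, n):
        send(json.dumps({"group": batch}).encode("utf-8"))
        published += 1
    return published
```

Grouping rows before publishing means one Kafka message carries several database rows, which would in turn mean fewer, larger files on the S3 side.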

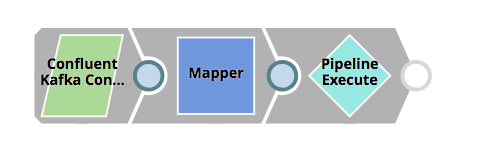

Consume from Kafka to S3

Source: Kafka

Target: Pipeline Execute

Snaps used: Confluent Kafka Consumer, Mapper, Pipeline Execute (calling Write file to S3 pipeline)
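The consume side can be pictured as a loop that reshapes each message and then calls a child routine once per message. The sketch below is a rough illustration under stated assumptions, not the pipeline itself: `messages` is any iterable of raw message bytes standing in for a Kafka consumer, `child_pipeline` is a plain callable standing in for the called pipeline, and the file-naming scheme is hypothetical.

```python
import json


def map_message(raw_value, offset):
    """Decode one message and attach a target file name (naming scheme is assumed)."""
    payload = json.loads(raw_value.decode("utf-8"))
    return {"filename": f"kafka-offset-{offset}.json", "content": payload}


def consume(messages, child_pipeline):
    """Call child_pipeline once per message and return how many were handled.

    The enumerate() index stands in for a Kafka offset in this sketch.
    """
    handled = 0
    for offset, raw_value in enumerate(messages):
        child_pipeline(map_message(raw_value, offset))
        handled += 1
    return handled
```

Keeping the write logic in a separate callable mirrors the parent and child split in the pattern: the consumer only decides what to write and under which name.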

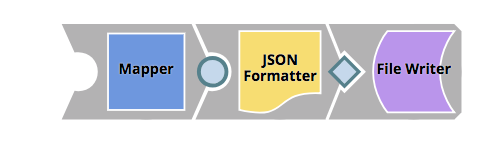

Write file to S3

Source: Kafka

Target: Amazon S3 Storage

Snaps used: Mapper, JSON Formatter, File Writer
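As a rough sketch of the format-then-write step, the function below serializes a document as JSON and writes it to a file. It assumes the incoming document has `filename` and `content` fields (hypothetical names) and writes to a local directory as a stand-in for an S3 bucket, so it shows the shape of the step rather than an actual S3 upload.

```python
import json
from pathlib import Path


def write_file(document, target_dir):
    """Format document["content"] as JSON and write it under target_dir.

    target_dir is a local directory standing in for the S3 location;
    the "filename" and "content" fields are assumptions of this sketch.
    """
    path = Path(target_dir) / document["filename"]
    path.write_text(json.dumps(document["content"], indent=2), encoding="utf-8")
    return path
```

In the actual pattern the target would be an S3 path configured on the File Writer Snap; the local path here only keeps the example runnable without credentials.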

Downloads

Publish to Kafka.slp (5.4 KB)

Consume from Kafka to S3.slp (5.4 KB)

Write file to S3.slp (4.5 KB)
