Populate Records

05-24-2019 06:09 AM

Hi All,

I need some suggestions for the implementation below.

Can someone help me with ideas or suggestions?

Thanks in advance.

05-24-2019 10:26 AM

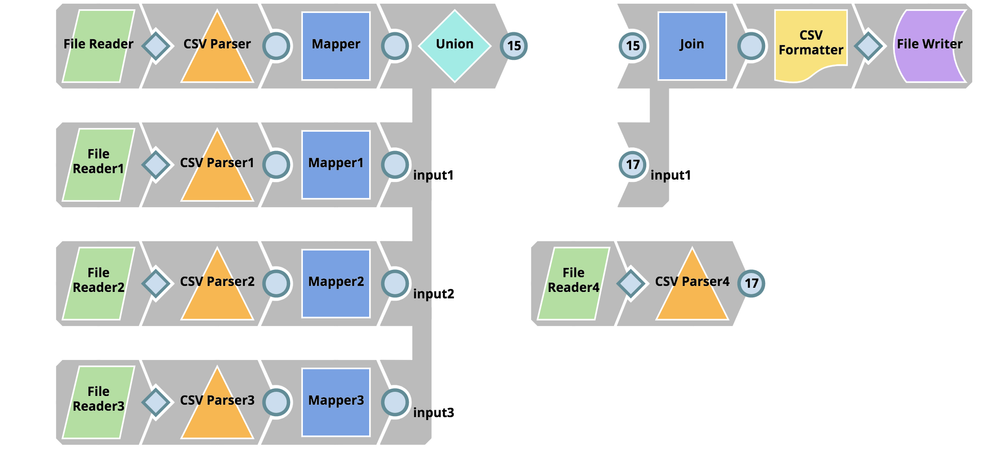

You can do the following. I have written it in SQL for ease of representation, but you can translate it into Snaps.

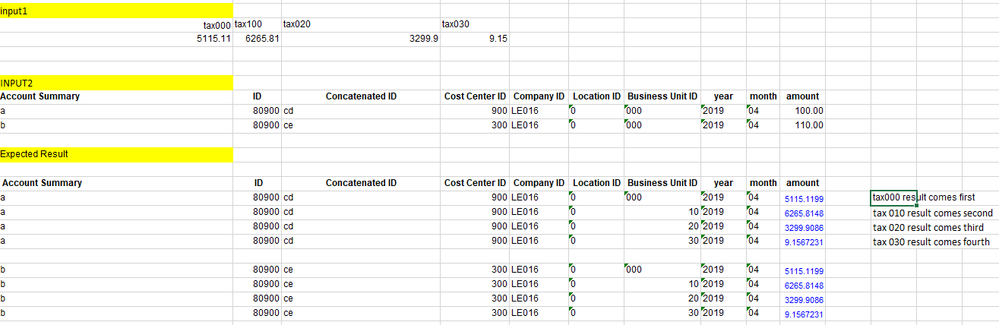

    SELECT Account_Summary, ID, Concatenated_ID, Cost_Center_ID, Company_ID, Location_ID, Business_Unit_ID, year, unpivot1.amount
    FROM input2,
         (SELECT tax000 FROM input1
          UNION ALL
          SELECT tax100 FROM input1
          UNION ALL
          SELECT tax020 FROM input1
          UNION ALL
          SELECT tax030 FROM input1) unpivot1 (amount)

I have attached a sample pipeline image that shows how the above SQL can be translated into Snaps. The FileReader through FileReader3 Snaps read from the input2 table, and the FileReader4 Snap reads from the input1 table.
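For readers who want to check the logic outside SnapLogic, here is a minimal Python sketch of what the SQL above does: it turns the four tax columns of input1 into rows (the UNION ALL part) and then pairs every input2 record with every one of those rows (the comma join). The sample values and the helper names `unpivot` and `cross_join` are assumptions made for illustration; only the column names come from the query.

```python
# Hypothetical sample data; the real inputs were shared only as screenshots.
input1 = [{"tax000": 10.0, "tax100": 20.0, "tax020": 30.0, "tax030": 40.0}]
input2 = [
    {"Account_Summary": "A1", "ID": 1, "year": 2019},
    {"Account_Summary": "A2", "ID": 2, "year": 2019},
]

# Column names taken from the four UNION ALL branches of the query.
TAX_COLUMNS = ["tax000", "tax100", "tax020", "tax030"]


def unpivot(rows, columns):
    """Mimic the UNION ALL branches: emit one {'amount': value} row per
    column, column by column."""
    return [{"amount": row[col]} for col in columns for row in rows]


def cross_join(left, right):
    """Mimic 'FROM input2, (...) unpivot1': pair every left record with
    every right record."""
    return [{**l, **r} for l in left for r in right]


result = cross_join(input2, unpivot(input1, TAX_COLUMNS))

for record in result:
    print(record)
```

With two input2 records and four tax columns this yields eight records. SQL does not guarantee row order without an ORDER BY, so the ordering here is only a convenience of the sketch.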

Hope this helps.

05-25-2019 12:34 AM

Hi @psathyanarayan, can you share the pipeline as well? I need to populate the business unit ID as 000,10,20,30 for each record.

The first record will have the tax000 amount, business unit ID 000, and the input 2 details.

The second record will have the tax100 amount, business unit ID 100, and the input 2 details.

Likewise for the third and fourth records.
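The requirement above can be sketched in Python as follows. This is only an illustration, assuming the business unit ID is exactly the suffix of the tax column name (tax000 gives 000, tax100 gives 100); if the real IDs differ from the suffix, a lookup dictionary could replace the slice. The function name `expand` and all sample values are hypothetical.

```python
def expand(detail, tax_row, prefix="tax"):
    """Return one output record per tax column in tax_row.

    Each record copies the input 2 details, overrides Business_Unit_ID with
    the column suffix, and stores the column value as 'amount'.
    """
    records = []
    for column, amount in tax_row.items():
        if not column.startswith(prefix):
            continue  # ignore any non-tax columns
        records.append(
            {**detail, "Business_Unit_ID": column[len(prefix):], "amount": amount}
        )
    return records


# Hypothetical values standing in for one input 1 row and one input 2 row.
tax_row = {"tax000": 10.0, "tax100": 20.0, "tax020": 30.0, "tax030": 40.0}
detail = {"Account_Summary": "A1", "ID": 1, "Business_Unit_ID": None, "year": 2019}

records = expand(detail, tax_row)

for record in records:
    print(record)
```

Carrying the column name along with the amount is the piece a plain UNION ALL on the amount alone would lose, which is why each output record keeps both values.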

05-25-2019 01:25 AM

Hi @psathyanarayan, I am attaching screenshots to make the requirement clearer.

input 1

input 2

Expected results for the first record in input 2

Similarly, we should get results for the other records in input 2.

Each record from input 1 should be combined with each record in input 2.
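Pairing every record of one input with every record of the other is a cross join. The sketch below shows the idea in Python on two small CSV texts. The CSV layouts and values are invented stand-ins, since the actual inputs appeared only in screenshots, and `read_csv` and `cross_join` are hypothetical helper names.

```python
import csv
import io
from itertools import product

# Hypothetical stand-ins for the two CSV inputs.
INPUT1_CSV = "Business_Unit_ID,amount\n000,10\n100,20\n"
INPUT2_CSV = "Account_Summary,ID,year\nA1,1,2019\nA2,2,2019\nA3,3,2019\n"


def read_csv(text):
    """Parse CSV text into a list of dicts keyed by the header row."""
    return list(csv.DictReader(io.StringIO(text)))


def cross_join(left, right):
    """Pair every left record with every right record.
    On a key clash the right-hand value wins."""
    return [{**l, **r} for l, r in product(left, right)]


rows = cross_join(read_csv(INPUT2_CSV), read_csv(INPUT1_CSV))

for row in rows:
    print(row)
```

Three input 2 records and two input 1 records give six output records, grouped by input 2 record. All values stay strings because `csv.DictReader` does no type conversion.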

05-25-2019 01:55 PM

Hello @Ajay_Chawda,

Your initial use case differs from the one you sent later. The latest use case does not require switching columns to rows, so there is no need for UNION and the like.

I have attached the pipeline and the two CSV files, input1.txt and input2.txt, that address your latest requirement. You will need to change the File Reader Snaps' configuration to point to wherever you save the input files, as they all currently point to my local system.

Hope this helps.

Community_Case2_2019_05_25.slp (13.9 KB)

input1.txt (110 Bytes)

input2.txt (219 Bytes)
