- SnapLogic - Integration Nation

- Snaps Packs

- Remove duplicate values from the JSON array

08-28-2020 06:50 AM

Hello all,

I have a JSON array and need to split it based on the iNumber field: the elements whose iNumber appears only once should go into one array, and the elements whose iNumber is duplicated should go into another array.

Input JSON:

[
  {"pName": "abc", "iNumber": 123},
  {"pName": "def", "iNumber": 123},
  {"pName": "xyz", "iNumber": 890},
  {"pName": "jkl", "iNumber": 456}
]

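Required output (first the elements whose iNumber is duplicated, then the elements whose iNumber is unique):

[
  {"pName": "abc", "iNumber": 123},
  {"pName": "def", "iNumber": 123}
]

[
  {"pName": "xyz", "iNumber": 890},
  {"pName": "jkl", "iNumber": 456}
]

That is, two separate JSON arrays: one with the elements whose iNumber was duplicated and another with the elements whose iNumber appears only once.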

I tried `filter((item, pos, a) => a.findIndex(elem => item.iNumber == elem.iNumber) == pos)`, but it didn't give the required result: it still keeps one element from each set of duplicates.
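The findIndex attempt falls short because it keeps an element only when it is the first element with that iNumber, which deduplicates the array instead of splitting it into unique and duplicated elements. A minimal TypeScript sketch (the `Item` interface is an assumption based on the sample input, not part of the original post) reproduces that behavior with the question's data:

```typescript
// Element shape assumed from the question's sample input.
interface Item {
  pName: string;
  iNumber: number;
}

const input: Item[] = [
  { pName: "abc", iNumber: 123 },
  { pName: "def", iNumber: 123 },
  { pName: "xyz", iNumber: 890 },
  { pName: "jkl", iNumber: 456 },
];

// Same logic as the attempted expression: keep an element only if its
// position equals the index of the FIRST element sharing its iNumber.
// Later repeats are dropped, but one copy of each duplicate survives,
// so the result is a deduplicated array, not a unique/duplicate split.
const firstOccurrences = input.filter(
  (item, pos, arr) =>
    arr.findIndex((elem) => item.iNumber === elem.iNumber) === pos,
);

// pNames kept: abc, xyz, jkl ("def" is dropped, "abc" is not)
console.log(firstOccurrences.map((i) => i.pName));
```

"abc" stays in the result even though its iNumber is duplicated, which is exactly the leftover duplicate described in the question.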

08-28-2020 04:28 PM

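Try using the filter method. For each element, the inner filter counts how many elements in the array share its iNumber: a count of exactly 1 puts the element in "Unique", and any other count puts it in "Dups".

For example, with the input array in $array: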

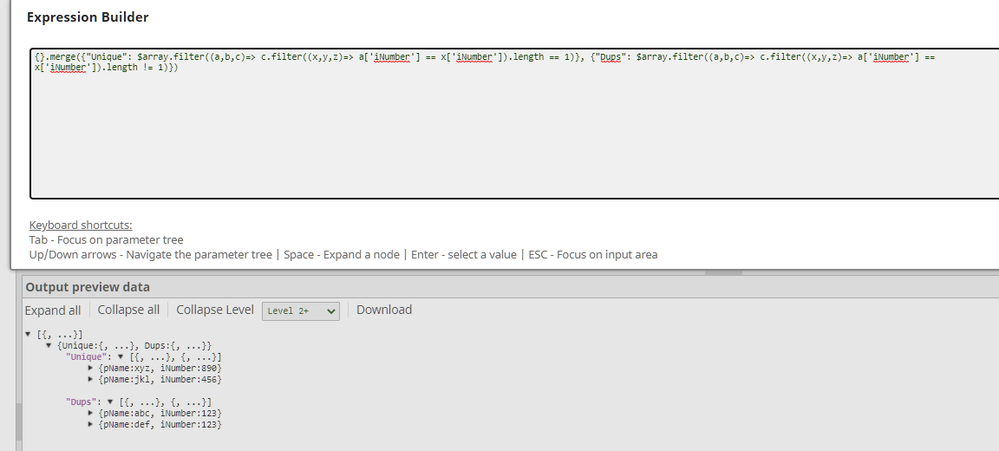

{}.merge({"Unique": $array.filter((a,b,c)=> c.filter((x,y,z)=> a['iNumber'] == x['iNumber']).length == 1)}, {"Dups": $array.filter((a,b,c)=> c.filter((x,y,z)=> a['iNumber'] == x['iNumber']).length != 1)})
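Outside SnapLogic, the counting logic of this expression can be checked with a plain TypeScript sketch. The names `Item`, `partitionByINumber` and `partitionWithMap` are hypothetical. The first function mirrors the expression (one inner filter per element, so O(n²) comparisons); the second is an alternative that is not part of the original answer and counts each iNumber once in a `Map`.

```typescript
// Element shape assumed from the question's sample input.
interface Item {
  pName: string;
  iNumber: number;
}

type Partition = { Unique: Item[]; Dups: Item[] };

// Mirrors the expression: for each element, the inner filter counts how many
// elements share its iNumber. Count 1 -> Unique, otherwise -> Dups.
// Every count rescans the whole array, so this is O(n^2).
function partitionByINumber(array: Item[]): Partition {
  const countOf = (a: Item): number =>
    array.filter((x) => a.iNumber === x.iNumber).length;
  return {
    Unique: array.filter((a) => countOf(a) === 1),
    Dups: array.filter((a) => countOf(a) !== 1),
  };
}

// Alternative (not from the original answer): count every iNumber once in a
// Map, then filter once per output array. O(n) overall.
function partitionWithMap(array: Item[]): Partition {
  const counts = new Map<number, number>();
  array.forEach((item) => {
    counts.set(item.iNumber, (counts.get(item.iNumber) || 0) + 1);
  });
  return {
    Unique: array.filter((a) => counts.get(a.iNumber) === 1),
    Dups: array.filter((a) => counts.get(a.iNumber) !== 1),
  };
}

const input: Item[] = [
  { pName: "abc", iNumber: 123 },
  { pName: "def", iNumber: 123 },
  { pName: "xyz", iNumber: 890 },
  { pName: "jkl", iNumber: 456 },
];

const result = partitionByINumber(input);
const mapResult = partitionWithMap(input);
console.log(JSON.stringify(result));
```

Both versions use `filter` for the outputs, so each array keeps the elements in their original input order; the `Map` variant only matters once the input array gets large.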

08-28-2020 10:40 AM

Have you tried the Group by Fields Snap?

Diane Miller

Community Manager
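The Group by Fields suggestion takes a different route: group the documents by iNumber and treat any group with more than one member as duplicates. The sketch below is only a plain-TypeScript illustration of that idea, assuming groups are formed in first-seen order; it does not model the Snap's actual configuration or output format, and `groupByINumber` is a hypothetical helper.

```typescript
// Element shape assumed from the question's sample input.
interface Item {
  pName: string;
  iNumber: number;
}

// Hypothetical helper: one group per distinct iNumber. A Map iterates in
// insertion order, so groups come out in first-seen order.
function groupByINumber(items: Item[]): Map<number, Item[]> {
  const groups = new Map<number, Item[]>();
  items.forEach((item) => {
    const group = groups.get(item.iNumber);
    if (group) {
      group.push(item);
    } else {
      groups.set(item.iNumber, [item]);
    }
  });
  return groups;
}

const input: Item[] = [
  { pName: "abc", iNumber: 123 },
  { pName: "def", iNumber: 123 },
  { pName: "xyz", iNumber: 890 },
  { pName: "jkl", iNumber: 456 },
];

const dups: Item[] = [];
const unique: Item[] = [];
// Groups with more than one member hold the duplicated iNumbers;
// single-member groups hold the unique ones.
groupByINumber(input).forEach((group) => {
  (group.length > 1 ? dups : unique).push(...group);
});

console.log(JSON.stringify({ unique, dups }));
```

One design difference: because duplicates are emitted group by group, an input with iNumbers 1, 2, 1, 2 would come out as both 1s followed by both 2s, whereas the filter-based expression keeps the original input order.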

08-29-2020 01:43 AM

@alchemiz Thanks a lot. It worked.

08-30-2020 11:36 PM

Glad to be of help 😀