- SnapLogic - Integration Nation

- Designing and Running Pipelines

Snaplogic Loop

07-22-2020 11:25 AM

Hello fellow Snaplogicians,

I am working on a pipeline that must read one or more rows about a single entity, sequentially, and apply each of them to a single document. A row does not necessarily change the document; it only represents a possible change.

Generating the initial document is easy enough, since I can select the first entry of the table, but handling the subsequent entries is trickier, as there could be 0 more entries, 10,000 more entries, and so on.

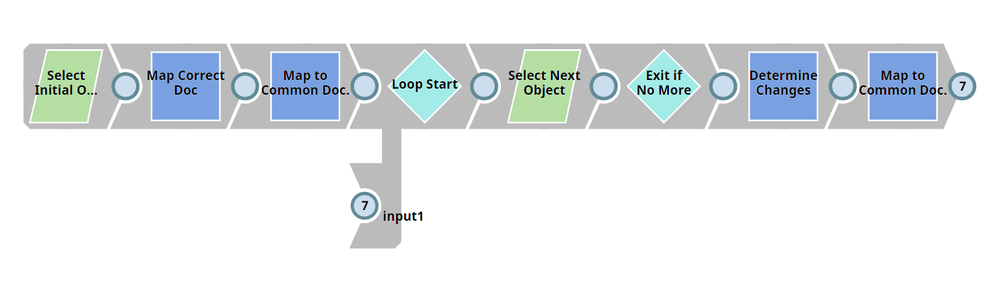

Example of a basic pipeline. The pipeline in the image, like the one I have been working on, will not prepare when the second input to the Union is connected, in either validation or execution. This seems to indicate that this type of operation is not allowed or not available, regardless of whether it would work.

In this image, the select step retrieves the first edit of each uniquely identified object as its own document; each object has its own set of one or more changes. That edit is converted into a common object, which then enters the loop, where the next edit for that object is retrieved. If no further edit exists for that object, that object alone is removed from the loop and is not processed any further. If there is an edit, it is compared against the document to determine whether it contains a change that should be applied.

After this comparison, the document is mapped back to the common object format with any changes applied. It then re-enters the loop and retrieves the next edit.

This is repeated as long as any document has another edit to retrieve. It is essentially a loop, which would be easy enough to implement in Java (or another language), but within SnapLogic it causes issues.
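For comparison, here is roughly what that loop looks like outside SnapLogic. This is a minimal Python sketch, not a SnapLogic feature: the edit record shape (`id`, `field`, `value`), the function name `apply_edits`, and the sample data are hypothetical, and it assumes "a change that should be applied" means the edit's value differs from the document's current value.

```python
from typing import Dict, Iterable, List


def apply_edits(edits: Iterable[dict]) -> Dict[object, dict]:
    """Fold ordered edit rows into one document per entity.

    Hypothetical record shape: {"id": ..., "field": ..., "value": ...},
    with edits already in the order they should be applied.
    """
    documents: Dict[object, dict] = {}
    for edit in edits:
        doc = documents.get(edit["id"])
        if doc is None:
            # First edit for this entity: convert it into the common object.
            documents[edit["id"]] = {"id": edit["id"], edit["field"]: edit["value"]}
        elif doc.get(edit["field"]) != edit["value"]:
            # Later edits are only *possible* changes: compare first, write only if it differs.
            doc[edit["field"]] = edit["value"]
        # An entity with no further edits is simply never touched again ("leaves the loop").
    return documents


# Hypothetical sample data, in application order.
SAMPLE_EDITS: List[dict] = [
    {"id": 1, "field": "status", "value": "new"},
    {"id": 2, "field": "status", "value": "open"},
    {"id": 1, "field": "status", "value": "new"},  # no actual change
    {"id": 1, "field": "owner", "value": "jacob"},
    {"id": 1, "field": "status", "value": "closed"},
]

if __name__ == "__main__":
    for doc in apply_edits(SAMPLE_EDITS).values():
        print(doc)
```

Each edit depends on the state left by the previous one, so this is a fold over the ordered edits rather than an independent transformation of each row; that dependency is what the feedback connection into the Union is trying to express.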

So the question is: what are the alternatives for doing this within SnapLogic exclusively, without outside resources?

This seems like it would be a powerful tool if loops like this were allowed, since it would open up the possibility of non-linear processing. But perhaps I am missing something that would enable similar functionality without the need for a loop.

An alternative might be to call a child pipeline, but I would need to send in the object, and there could be many calls.

Another alternative may be nested child pipelines, where the object is passed in and continues down through the children until there are no more edits for the object. But this seems costly, as there could be millions of child pipelines being executed.

Any ideas? If anything needs more explanation, I am more than happy to elaborate.

Thanks!

07-24-2020 12:10 AM

Hi @jacob.ramsey,

In general, you should avoid loops in SnapLogic, as it is not a programming language, and the behavior in such cases could be unexpected.

Anyhow, if you can replace the “Select Initial O…” Snap with some sample data in a JSON Generator Snap and attach the pipeline (.slp file), it would be much easier for the community to give you a good answer.

08-02-2020 08:01 AM

Hi Jacob,

Good day. I agree with Sir Igor: avoid loops within your pipeline flow.

Usually, when I encounter this kind of scenario, I put the streaming documents into an array (previously with a Group snap; now there's an easy way using the Gate snap). Once all the streaming documents are contained in an array, you can do your loop by invoking .map(). Then, at the end of your transformation/validation/etc., use a JSON Splitter to stream each item in the array back out as its own document 🙂
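A rough Python analogy of that collect, map, split pattern, showing the data shape at each step. The functions `gate`, `apply_group`, and `json_splitter` are hypothetical stand-ins for the Gate snap, the `.map()` callback, and the JSON Splitter snap; they are not SnapLogic APIs, and the record fields (`id`, `field`, `value`) are assumed. Because the edits in the question build on each other, the sketch groups the collected array by entity first, so the sequential loop runs inside the mapped function.

```python
from typing import Dict, Iterable, Iterator, List


def gate(stream: Iterable[dict]) -> List[dict]:
    # Stand-in for the Gate snap: wait for every upstream document, then hold them as one array.
    return list(stream)


def apply_group(edits: List[dict]) -> dict:
    # Stand-in for the function passed to .map(): apply one entity's edits in order.
    doc: dict = {"id": edits[0]["id"]}
    for edit in edits:
        if doc.get(edit["field"]) != edit["value"]:
            doc[edit["field"]] = edit["value"]
    return doc


def json_splitter(array: List[dict]) -> Iterator[dict]:
    # Stand-in for the JSON Splitter snap: emit each array element as its own document.
    yield from array


def pipeline(stream: Iterable[dict]) -> List[dict]:
    collected = gate(stream)
    # Group the collected array by entity, preserving the original edit order.
    groups: Dict[object, List[dict]] = {}
    for edit in collected:
        groups.setdefault(edit["id"], []).append(edit)
    # The ".map()" step: one result document per entity.
    results = list(map(apply_group, groups.values()))
    return list(json_splitter(results))
```

The trade-off, as with the Gate snap itself, is that the whole stream is held as one array before anything is emitted, which is likely to matter when an entity can have tens of thousands of edits.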
