How to pass a variable along the pipeline?

07-17-2019 01:00 PM

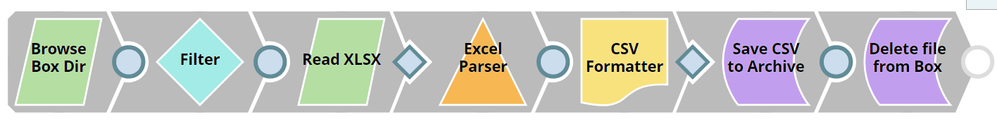

I have a pipeline that looks like this:

The Snaps are:

- List all files in a given Box directory

- Filter the files according to a mask

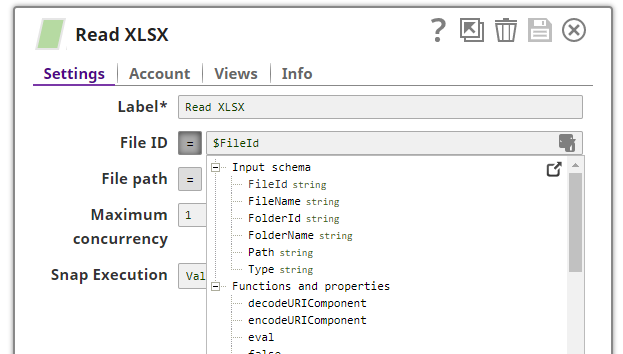

- Read the matching Excel file into the pipeline

- Convert the first Worksheet to CSV

- Write a CSV file to an archive folder in Box

- Delete the original Excel file

Everything works up to step 5, where I need to access the output fields of step 2 again. The FileName is needed to create the matching file name in the archive folder (this time with a .csv extension), and the FileId is needed in step 6 to delete the Excel file.
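The file-name derivation described here can be sketched outside SnapLogic. The Python below is only an illustration of the intended result, not SnapLogic expression syntax; the function name `archive_name`, the `archive` folder, and the sample file name are hypothetical.

```python
from pathlib import PurePosixPath


def archive_name(file_name: str, archive_folder: str = "archive") -> str:
    """Return the archive path for file_name with its extension swapped to .csv.

    archive_folder and the sample name below are hypothetical placeholders.
    """
    # Replace the last extension (e.g. .xlsx) with .csv and keep only the file name.
    csv_name = PurePosixPath(file_name).with_suffix(".csv").name
    return str(PurePosixPath(archive_folder) / csv_name)


print(archive_name("2019-07-17_report.xlsx"))
```

The date prefix is left untouched, so the archived CSV keeps the same base name as the incoming Excel file.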

The business reason for this is that the file arriving in the Box folder will have a date prefix at the front of its name. The file has no set frequency; it arrives at practically random intervals. Therefore, I cannot hard-code the actual file name into the pipeline and must dynamically check for a new file every day.

How can I store the output fields from step 2 somewhere in memory so that the final two Snaps in the pipeline can access them?

07-18-2019 03:55 PM

Hmm, I’m not quite sure what you mean here. The PipeExec snap basically obsoletes the ForEach snap.

That’s not going to be true in the general case. So, we can’t add something so error prone and hope that people only use it in the right situations.

07-19-2019 03:24 AM

I believe that this would be very helpful if added to the “Parameters and Fields” documentation.

08-12-2019 05:32 AM

Thanks @tstack for the suggestion. To be honest, I find this a common requirement that is very complicated to implement. Parent/Child pipelines are much harder to debug, and the behavior is strangely inconsistent: the Box Read snap outputs the “content location” (i.e. the filename), but the CSV/Excel Parser snaps drop everything passed to them except the file contents. A viable solution could be an option for the CSV/Excel Parser to include the originating filename in its output, much like Alteryx does.
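The idea of a parser that carries the originating filename through to its output can be illustrated with a small Python sketch. This is not how the SnapLogic CSV/Excel Parser works today; the function `parse_csv_with_source`, the `source_file` field name, and the sample data are all hypothetical.

```python
import csv
import io
from typing import Dict, Iterator


def parse_csv_with_source(content: str, file_name: str,
                          field: str = "source_file") -> Iterator[Dict[str, str]]:
    """Yield one dict per CSV row, each tagged with the file it came from."""
    for row in csv.DictReader(io.StringIO(content)):
        tagged = dict(row)
        # Hypothetical extra field that keeps the filename available downstream.
        tagged[field] = file_name
        yield tagged


rows = list(parse_csv_with_source("id,amount\n1,10\n2,20\n", "2019-08-12_sales.csv"))
print(rows)
```

With the filename attached to every row, a later step (such as the archive write or the delete) could read it from the data itself instead of needing a separate variable.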

08-12-2019 07:58 PM

This is another use case that is effectively asking for this feature. The parallel issue comes up again, of course, but that doesn’t negate the functional requirement.
